- Microsoft recently unveiled an AI model called VALL-E that can create highly realistic replications of people’s voices.

- VALL-E can generate speech in a person’s voice using only a three-second recording of that voice as a prompt.

- One of the key features that differentiates VALL-E from other AI models is its ability to replicate the emotions of a speaker, enabling it to create highly convincing audio replications.

Microsoft has developed a new text-to-speech artificial intelligence model, called VALL-E, that can mimic a person’s voice from just three seconds of audio. Once VALL-E has learned a specific voice, it can generate audio of that person saying anything, and its ability to mimic voices accurately is stunning. Should we all be worried?

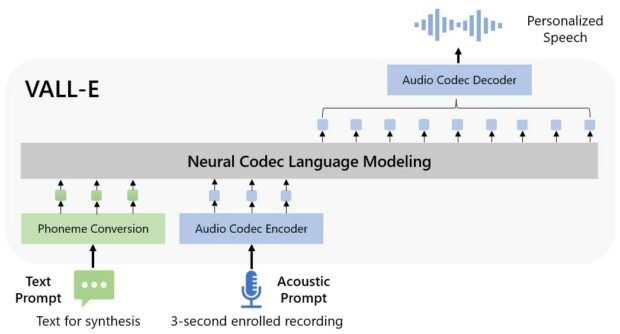

How Does The Microsoft VALL-E AI Work?

The researchers at Microsoft describe VALL-E as a “neural codec language model.” It builds on Meta’s EnCodec, an AI-powered audio compression technique. Traditional text-to-speech tools typically predict an intermediate representation of the sound (such as a spectrogram) and then convert it into a waveform. VALL-E instead uses EnCodec to break audio down into discrete units known as “tokens.” Given a piece of text and the tokens of a short voice sample, VALL-E predicts the tokens that speaker would produce when saying that text, and EnCodec’s decoder turns those tokens back into the final waveform.
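To make the idea of audio “tokens” a little more concrete, here is a toy sketch in Python. It is not VALL-E or EnCodec: it assumes a fixed, hand-made codebook of 16 amplitude levels (real neural codecs learn their codebooks and quantize learned features rather than raw samples), and the names `CODEBOOK`, `encode`, and `decode` are hypothetical. It only shows the general principle of turning a waveform into a sequence of discrete token IDs and back.

```python
import math

# Hypothetical toy codebook: 16 evenly spaced amplitude levels between -1 and 1.
# Real codecs such as EnCodec learn their codebooks; this is for illustration only.
CODEBOOK = [-1.0 + 2.0 * i / 15 for i in range(16)]

def encode(samples):
    """Map each audio sample to the index (the "token") of the nearest codebook level."""
    return [min(range(len(CODEBOOK)), key=lambda i: abs(CODEBOOK[i] - s)) for s in samples]

def decode(tokens):
    """Turn token indices back into approximate audio sample values."""
    return [CODEBOOK[t] for t in tokens]

# A short synthetic "waveform": 8 samples of one sine-wave cycle.
wave = [math.sin(2 * math.pi * n / 8) for n in range(8)]
tokens = encode(wave)
restored = decode(tokens)

print("tokens:", tokens)
print("max reconstruction error:", max(abs(a - b) for a, b in zip(wave, restored)))
```

The reconstruction is lossy but close, since every sample lands within half a codebook step of its original value. In VALL-E, a language model predicts sequences of codec tokens like these, conditioned on the text and on the tokens of the three-second prompt, rather than predicting the audio directly.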

This approach could let VALL-E produce voices for many uses, such as audiobooks and videos. It’s a bit like a magic wand for computer voices: the result sounds far more like a real person.

Why Does The VALL-E AI Sound So Human-Like And Natural?

Unlike other text-to-speech or AI-powered speech tools, VALL-E sounds very natural, almost human. How was Microsoft able to achieve this?

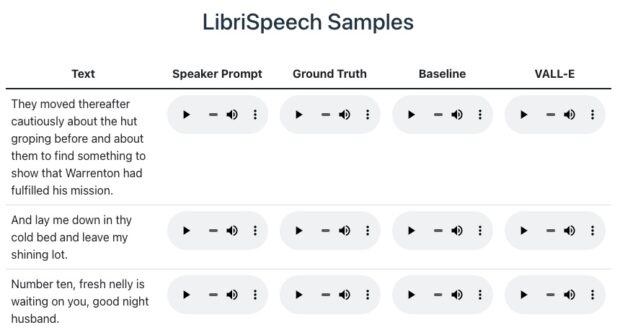

Microsoft trained VALL-E’s speech synthesis capabilities on 60,000 hours of English speech from more than 7,000 speakers, using an audio library called Libri-Light assembled by Meta. The quality of VALL-E’s output depends heavily on how closely the three-second sample matches the voices in its training data.

Microsoft’s researchers published a paper describing how they used VALL-E to synthesize a range of voices on arXiv, the preprint server run by Cornell University, and Microsoft posted some incredible samples on GitHub. Take a listen for yourself. The quality varies: some samples sound natural, while others sound more robotic.

VALL-E AI Can Even Replicate Emotions

VALL-E sets itself apart from other AI tools with its ability to reproduce the emotion and tone of a speaker, even when generating audio of words the original speaker never said. It is also able to replicate the acoustic environment of the sample, creating an authentic and realistic imitation of the speaker.

Should Voice Actors Be Concerned?

As technology advances, voice actors may face competition from VALL-E and similar technologies. Companies are likely to adopt these tools wherever they can replace human voice actors, for example in audiobook narration, and that could cost some voice actors work.

Apple is reportedly already working on using voice AI tools for audiobook narration. Should voice-over professionals be worried?

“AI is in its infancy, but growing rapidly. We’re at a seminal moment, but AI voice tech doesn’t have to be scary for voice actors,” said Bonnie Optekman, a non-union voice-over artist in New York City. “There might be some lost work, but there is also new opportunity. Voice actors should be educating themselves now on what the future opportunities are, what compensation should be, and how to protect themselves.”

How Easy Would It Be To Steal Your Voice?

If you aren’t already a little concerned, you should be. Even Microsoft is concerned, which is why, unlike OpenAI’s ChatGPT, VALL-E has not been released to the public.

Just imagine what someone could do with one of your voicemails. In the wrong hands, VALL-E could be used to:

- Create authentic-sounding spam calls from people you know.

- Fabricate scandalous recordings of politicians or other public figures, like celebrities.

- Call your boss and quit your job.

- End a relationship.

- Authorize financial transactions. Many banks use voice authentication, and that will have to change very quickly.

Are You Worried About AI Voice Hackers?

How concerned are you about AI voice hackers? What do you think criminals or your enemies would do with your voice if they could recreate it? Let us know in the comments.


Frank Wilson is a retired teacher with over 30 years of combined experience in education, small-business technology, and real estate. He now blogs as a hobby and spends most days tinkering with old computers. Wilson is passionate about tech, enjoys fishing, and loves drinking beer.
