Google’s new Google-Extended tool allows website publishers to opt out of having their content used to train AI models while keeping their sites accessible through Google Search.

Publishers’ sites can still be crawled and indexed by crawlers such as Googlebot. However, their data will not be used to train AI models like Bard (Google’s AI chatbot) and Vertex AI (Google’s machine learning platform).

Google says that it introduced these new AI crawling capabilities in response to feedback from website owners, who wanted “greater choice and control over how their content is used” to train AI models. The company says that it is committed to providing transparency and control to website owners, and that Google-Extended is an important step in that direction.

How To Use Google-Extended

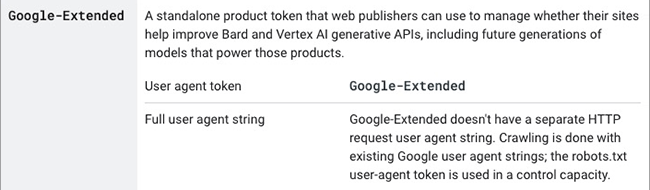

If you manage a website, it’s easy to use Google-Extended. The new control is implemented through the robots.txt file, the text file web publishers use to tell search engine crawlers which pages on a website they can and cannot access. Website owners can simply add a few lines to their robots.txt file to tell Google not to use their content for training its AI models.
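As a rough sketch of what those few lines might look like, the Python snippet below embeds a two-line robots.txt rule that targets the `Google-Extended` user-agent token and checks it with the standard library's `urllib.robotparser`. The `example.com` URL and the `can_fetch` helper are illustrative assumptions, and Python's parser only approximates how a crawler reads the file; it does not show how Google itself applies the rule.

```python
from urllib.robotparser import RobotFileParser

# Rule that opts the whole site out of Google-Extended.
# Other crawlers, such as Googlebot, are not named and so are not affected.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /
"""


def can_fetch(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Hypothetical helper: report whether user_agent may fetch url under robots_txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://example.com/article"  # placeholder URL for illustration
    for agent in ("Google-Extended", "Googlebot"):
        status = "allowed" if can_fetch(agent, url) else "blocked"
        print(f"{agent}: {status}")
```

Run as a script, this should report Google-Extended as blocked and Googlebot as allowed, which matches the idea of opting out of AI training while staying in Search. To opt out only part of a site, the `Disallow` path could be narrowed to a directory instead of `/`.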

More information on how to write the code that controls Google-Extended can be found on the company’s overview of Google crawlers and fetchers page.

An Opt-Out For Publishers From Becoming AI Training Data

Google-Extended is a welcome development for website owners who want more control over how their content is used to train AI models. It is also a sign that Google is committed to providing transparency and control to website owners in the era of AI.

Here’s a copy of the full statement from Google:

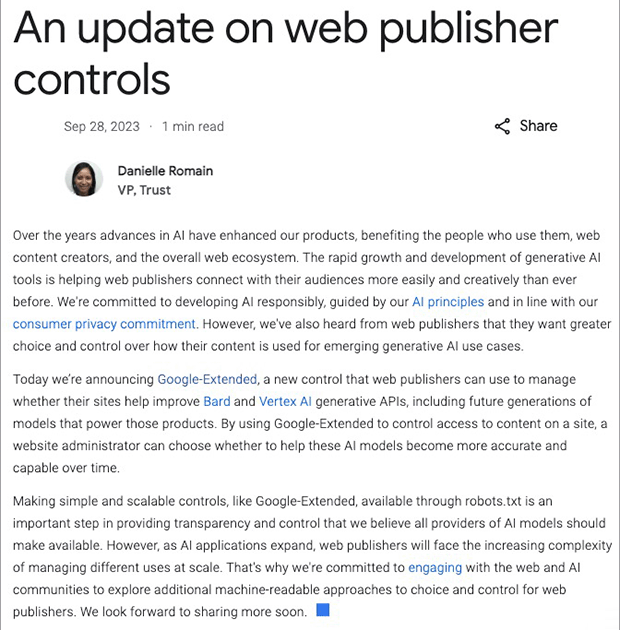

An update on web publisher controls:

“Over the years advances in AI have enhanced our products, benefiting the people who use them, web content creators, and the overall web ecosystem. The rapid growth and development of generative AI tools is helping web publishers connect with their audiences more easily and creatively than ever before. We’re committed to developing AI responsibly, guided by our AI principles and in line with our consumer privacy commitment. However, we’ve also heard from web publishers that they want greater choice and control over how their content is used for emerging generative AI use cases.

Today we’re announcing Google-Extended, a new control that web publishers can use to manage whether their sites help improve Bard and Vertex AI generative APIs, including future generations of models that power those products. By using Google-Extended to control access to content on a site, a website administrator can choose whether to help these AI models become more accurate and capable over time.

Making simple and scalable controls, like Google-Extended, available through robots.txt is an important step in providing transparency and control that we believe all providers of AI models should make available. However, as AI applications expand, web publishers will face the increasing complexity of managing different uses at scale. That’s why we’re committed to engaging with the web and AI communities to explore additional machine-readable approaches to choice and control for web publishers. We look forward to sharing more soon.”

Danielle Romain, VP, Trust at Google (The Keyword)

Will Publishers Embrace Google-Extended?

With Google-Extended, publishers’ sites can continue to be crawled and indexed by crawlers like Googlebot, but their data will not be used to train AI models as they develop over time. This is a welcome update from Google, as it gives website owners more control over how their content is used to train AI models.

What are you going to do with your website? Allow AI to use it for “training” or block AI? Do you think Google might secretly demote sites that block AI training in its search rankings?


